MagVin Lab v3.0: Starting Over with Full Control

MagVin Lab v3 began as a refusal to keep patching systems I could not trust. FastAPI backend, React frontend, containerized microservices, and a sovereign doctrine codified from earlier failures: no Python on the host, no mocked data, no dependencies that vanish on reboot. The result is incomplete but stable, legible, and real.

MAGVIN LAB

Lance

12/15/2025 · 5 min read

Screenshots from MagVin Lab v3.0

Context

NiceGUI lasted an afternoon.

The framework solved the technical problem that killed Version 1: WebSockets eliminated Streamlit's full-page reloads, and telemetry updates were delivered without flicker. When I saw the output, I immediately knew the framework could integrate with the backend but could never deliver the interface I wanted. The gauges were plain white rings. The layout felt boxy and uniform. It was good. It was not good enough.

Version 3.0 began the same day. If no existing framework could deliver both the technical foundation and the visual authority I required, then I would build the interface myself. FastAPI for the backend, React for the frontend, and complete control over every pixel.

The Architecture

The decision to start over carried a requirement: the new architecture had to justify the reset. FastAPI provided explicit, typed endpoints that let me inspect exactly what data flowed between the frontend and backend. React treated the interface as a continuously updating state machine, which meant I controlled rendering behaviour directly rather than negotiating with framework abstractions.

The stack formalised into a containerised sovereign grid:

magvin-cortex: The central orchestrator running on Python 3.10 Slim, responsible for querying Ollama, verifying model presence on disk, checking container DNS, and returning unified status to the frontend.

magvin-voice: Kokoro TTS isolated as a dedicated microservice for text-to-speech processing.

magvin-ears: Whisper V3 isolated for speech recognition, kept separate so an audio crash could never take down the central brain.

Chroma and Neo4j: Vector database and graph database working in tandem for retrieval-augmented generation (RAG) and knowledge visualisation.

Isolating audio into separate containers followed a lesson from earlier versions: heavy processes that might fail should never share memory space with the orchestrator. If Kokoro crashes, the system logs the failure and continues. The cortex keeps running.

The Sovereign Doctrine

Version 3.0 codified rules that previous versions had learned through failure. These became non-negotiable constraints:

No Python on the Windows host. Earlier versions had suffered from ghost processes: duplicate scripts running outside the controlled Docker environment, causing conflicts that were difficult to trace. Version 3 enforces a strict boundary where Windows serves only as a viewer, and Docker serves as the engine.

No pip install as a permanent fix. I repeatedly encountered restart amnesia: installing a library inside a running container, confirming it worked, then watching the fix vanish after a reboot because the dependency was never added to requirements.txt. Every dependency now requires a formal addition and a full container rebuild.
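One way to catch restart amnesia early is to compare what is installed in the container against what requirements.txt declares. The sketch below is an assumption about how such a check could look, not part of the MagVin codebase; `declared_packages` and `undeclared` are hypothetical helpers, and the package versions in the example are invented.

```python
import re
from typing import Iterable, Set


def normalise(name: str) -> str:
    """Normalise a package name so 'Python_Multipart' matches 'python-multipart'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def declared_packages(requirements_text: str) -> Set[str]:
    """Extract package names from requirements.txt content, ignoring comments and options."""
    names: Set[str] = set()
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0].strip()
        if not line or line.startswith("-"):
            continue  # blank line, comment, or a pip option such as -r / -e
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match:
            names.add(normalise(match.group(0)))
    return names


def undeclared(installed: Iterable[str], requirements_text: str) -> Set[str]:
    """Return installed packages that requirements.txt never mentions."""
    return {normalise(name) for name in installed} - declared_packages(requirements_text)


if __name__ == "__main__":
    # Hypothetical requirements file; versions are illustrative only.
    reqs = "fastapi==0.110.0\n# web server\nuvicorn[standard]>=0.29\n"
    print(undeclared(["FastAPI", "uvicorn", "Python_Multipart"], reqs))
```

In a real container the `installed` list could come from `importlib.metadata.distributions()`. Transitive dependencies would also show up as undeclared unless the requirements file is fully pinned, so the output is a prompt for review rather than a verdict.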

No mocking data. If a sensor fails, the interface must show the failure. The dashboard exists to reflect physical truth, and placeholder values that make the interface look complete while hiding errors violate the system's purpose.

No sharded model weights. Internal tools perform poorly with fragmented weight files, so the protocol requires single-file safetensors for all local deployments.
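A directory listing is enough to check this rule before a model is registered. The sketch below assumes shards follow the `model-00001-of-00003.safetensors` naming commonly produced by Hugging Face tooling; the function names are hypothetical, and other shard naming schemes would need their own pattern.

```python
import re
from typing import Iterable, List

# Assumed shard naming: "<prefix>-00001-of-00003.safetensors"
_SHARD_PATTERN = re.compile(r"-\d{5}-of-\d{5}\.safetensors$")


def sharded_files(filenames: Iterable[str]) -> List[str]:
    """Return the filenames that look like pieces of a sharded checkpoint."""
    return [name for name in filenames if _SHARD_PATTERN.search(name)]


def is_single_file_model(filenames: Iterable[str]) -> bool:
    """True when exactly one .safetensors file is present and it is not a shard."""
    weights = [name for name in filenames if name.endswith(".safetensors")]
    return len(weights) == 1 and not sharded_files(weights)


if __name__ == "__main__":
    print(is_single_file_model(["config.json", "model.safetensors"]))
    print(is_single_file_model([
        "model-00001-of-00002.safetensors",
        "model-00002-of-00002.safetensors",
    ]))
```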

These rules exist because I broke them in past versions. Each constraint traces back to a specific failure that cost time to diagnose and fix. Writing them down transformed tribal knowledge into system architecture.

The Interface

The frontend organises around seven tabs (refined from earlier iterations), each serving a distinct function within the command centre:

The Bridge provides system status via four semicircular gauges that display CPU load, RAM usage, GPU load, and GPU temperature. The telemetry polls every one to two seconds, reflecting the physical state of the hardware in real time. This is where I check the machine's heartbeat before starting heavy inference work.

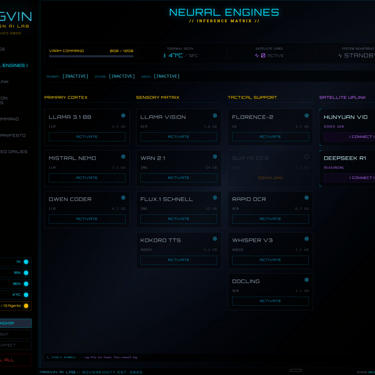

Neural Engines presents the Inference Matrix: a four-column layout categorising every available model by function. Primary Cortex hosts large language models (Llama 3.1, Mistral Nemo, Qwen Coder). Sensory Matrix includes vision, image generation, and speech models (Llama Vision, Wan 2.1, Flux, Schnell, Kokoro TTS). Tactical Support holds specialists (Florence-2 for captioning, Rapid OCR and Docling for document processing, Whisper V3 for transcription). Satellite Uplink hosts remote models accessed via API connections (Hunyuan Vid, Deepseek R1), distinguished by purple accents to indicate they operate outside the sovereign boundary.

The colour system provides immediate status recognition: cyan indicates local models available for activation, amber indicates models currently downloading or provisioning, and purple indicates models requiring an external connection. Each model card displays its VRAM footprint, allowing me to calculate load order before committing GPU memory.
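The status-to-colour mapping and the load-order arithmetic described above can be sketched in a few lines. Everything here is illustrative: `ModelCard`, `accent`, and `plan_load` are hypothetical names, the status keys are my own labels for the three states, and the model names and VRAM figures in the example are invented rather than measured.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple

# Colour per status, as described in the text.
STATUS_COLOURS = {"local": "cyan", "provisioning": "amber", "remote": "purple"}


@dataclass(frozen=True)
class ModelCard:
    name: str
    status: str      # one of the STATUS_COLOURS keys
    vram_gb: float   # VRAM footprint shown on the card


def accent(card: ModelCard) -> str:
    """Accent colour for a model card."""
    return STATUS_COLOURS[card.status]


def plan_load(cards: Iterable[ModelCard], budget_gb: float) -> Tuple[List[str], float]:
    """Walk cards in the given order, loading each local model that still fits the budget."""
    loaded: List[str] = []
    remaining = budget_gb
    for card in cards:
        if card.status != "local":
            continue  # downloading or remote models take no local VRAM here
        if card.vram_gb <= remaining:
            loaded.append(card.name)
            remaining -= card.vram_gb
    return loaded, remaining


if __name__ == "__main__":
    cards = [
        ModelCard("llm-a", "local", 8.0),       # hypothetical footprints
        ModelCard("remote-b", "remote", 0.0),
        ModelCard("vision-c", "local", 12.0),
        ModelCard("ocr-d", "local", 6.0),
    ]
    print(plan_load(cards, budget_gb=24.0))
```

The order of `cards` is the load order, so reordering the list is how a different priority would be expressed in this sketch.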

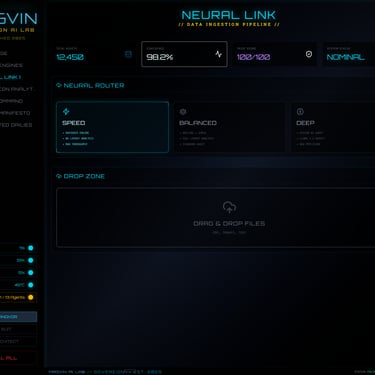

Neural Link handles the data ingestion pipeline, featuring a drop zone for documents and a Neural Router offering three processing modes (Speed, Balanced, Deep) depending on required accuracy. Panopticon Analytics visualises the knowledge graph as an interactive 3D node display. Agent Command will orchestrate multi-agent workflows once the foundation is complete. The System Manifesto documents the configuration and serves as the source of truth for the system state. Restricted Dailies is reserved for operational analytics.

The tabs beyond Neural Engines serve as scaffolding: interfaces designed and placed, awaiting backend wiring. This is intentional: build the architecture correctly before connecting everything.

Three Modes of Operation

The interface supports three operational modes, selectable from the sidebar:

Neon Angkor is the daily driver, presenting the full interface with live telemetry and model controls optimised for regular use.

The Suit provides identical functionality with a colour scheme appropriate for professional presentation. If I demonstrate the system to external stakeholders, this mode delivers the capability without the cyberpunk aesthetic.

The Architect enables full configuration access. Every editable parameter gains a small edit button; every component gains an information tooltip explaining its function, wiring, and role within the system. This mode exists because I knew I would step away from the project and return later without full context. When that happens, I can hover over any element and read exactly what it does, what it connects to, and why it exists. The interface becomes its own reference manual.

Building these modes required additional frontend work, but the investment addresses a real problem. Complex systems become opaque over time, and even the architect who built them can forget the reasoning behind specific decisions. Embedding documentation directly into the interface means the knowledge travels with the system rather than living in separate files that might never be reopened.

What Version 3.0 Delivered

Version 3.0 proved that the interface architecture works. The FastAPI backend serves typed, inspectable endpoints. The React frontend renders exactly what I specify without framework interference. The sovereign doctrine provides guardrails that prevent the classes of failures that plagued earlier versions.

The Neural Engines tab demonstrates the concept in practice: every available model visible at a glance, organised by category, displaying VRAM requirements and current status. I can activate models knowing exactly what resources they will consume. The interface behaves as a command centre should.

Version 3.0 is not complete. The Bridge displays real telemetry; the Neural Engines tab is substantially wired; the remaining tabs await their turn in the development sequence. I am building methodically, verifying each connection before moving forward, because the goal is a system I can rely on rather than a demo I can show.

The Lesson

Five versions of MagVin Desk and two versions of MagVin Lab preceded this build. Each taught something: that monolithic architectures collapse under their own weight; that virtual environments isolate but do not scale; that framework ceilings exist independent of coding skill; that quality is a first-order constraint; that simplicity often outperforms complexity. Version 3.0 benefits from all of it.

The lesson here is to trust the process. Each prior failure contributed to the doctrine governing this version. Each pivot refined the system's requirements. The architecture I am building now would have been impossible to design at the start because I lacked the knowledge that only comes from building the wrong thing several times first.

Version 3.1 will continue the wiring. The foundation holds.